As an independent developer, I've found that one of the most significant shifts in the modern workflow isn't just the capability of Large Language Models (LLMs), but where they reside. Cloud APIs from OpenAI or Anthropic are excellent for production applications, but in daily development workflows, constant API calls accumulate costs fast. More importantly, when we submit proprietary client code or architectural plans to closed APIs, we give up privacy and data sovereignty.

Enter DeepSeek R1. It has sent shockwaves through the developer community by offering reasoning capabilities that punch well above its weight class, and, crucially, its distilled variants come in sizes that we can actually run on local, consumer-grade hardware.

In this comprehensive guide, I’m going to break down exactly how I run DeepSeek locally in my own digital laboratory. We will cover the hardware realities (VRAM is your new bottleneck), the software stack needed to run models efficiently, and how to plug this localized intelligence directly into your daily IDE workflow without spending a cent on inference.

The Hardware Reality: VRAM is King

Before we touch terminal commands, we have to talk silicon. Running an LLM locally is constrained above all by your hardware's memory capacity and bandwidth. Specifically, Video RAM (VRAM) is the deciding factor.

When a model is loaded into memory, it needs space not just for its parameters (weights), but also for the KV cache, which holds attention state for every token in the context window during inference. A 7-billion-parameter (7B) model typically requires around 4-6GB of VRAM when heavily quantized (compressed). The DeepSeek R1 distillations come in various sizes (1.5B, 7B, 8B, 14B, 32B, and 70B).
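To see where the 4-6GB figure comes from, here is a back-of-the-envelope estimator. Weight memory is parameters times bits per weight divided by 8. The KV cache grows with the number of layers, key/value heads, head size, and context length. Treat this as a sketch, not a sizing tool. The ~4.5 bits per weight and the 7B architecture numbers (28 layers, 4 KV heads, 128-dimensional heads) are illustrative assumptions, and runtime overhead is ignored.

```javascript
// Back-of-the-envelope VRAM estimate for a quantized model.
// Assumptions: 1 GB = 1e9 bytes; runtime overhead (GPU buffers, activations) is ignored.

// Weights: parameters x bits per weight / 8 bits per byte.
function weightBytes(paramsBillions, bitsPerWeight) {
  return (paramsBillions * 1e9 * bitsPerWeight) / 8;
}

// KV cache: one key and one value vector per layer, per KV head, per token of context.
function kvCacheBytes({ layers, kvHeads, headDim, contextTokens, bytesPerElement }) {
  return 2 * layers * kvHeads * headDim * contextTokens * bytesPerElement;
}

function estimateVramGB(model) {
  const total = weightBytes(model.paramsBillions, model.bitsPerWeight) + kvCacheBytes(model);
  return total / 1e9;
}

// Hypothetical 7B configuration: these numbers are illustrative assumptions,
// not an official spec for any particular DeepSeek R1 distill.
const sevenB = {
  paramsBillions: 7,
  bitsPerWeight: 4.5, // assumed: 4-bit codes plus per-block scale data
  layers: 28,
  kvHeads: 4,
  headDim: 128,
  contextTokens: 4096,
  bytesPerElement: 2, // fp16 KV cache
};

console.log(`~${estimateVramGB(sevenB).toFixed(2)} GB before runtime overhead`);
```

For this hypothetical configuration it prints about 4.17 GB. The runtime's own buffers push real usage higher, which is why 4-6GB is the safer planning range.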

MacBook Pro (Apple Silicon): Apple's unified memory architecture is a massive advantage for local AI, because the GPU can address most of the system's RAM. An M2/M3 Max with 64GB of unified memory can comfortably load a 4-bit 32B DeepSeek model (roughly 20GB of weights) and run it with excellent tokens-per-second (t/s).

Windows/Linux Desktop (NVIDIA GPU): On an RTX 3060 (12GB VRAM), you can easily run 7B or 8B models, and a 4-bit 14B model (roughly 9GB) also fits, with less headroom for context. To run 32B (roughly 20GB at 4-bit) efficiently, you are looking at a 24GB card (RTX 3090/4090) or a dual-GPU setup.

The Independent Dev Recommendation: Don’t stress if you lack a massive GPU. Start with the `deepseek-r1:7b` model variant (based on Qwen). It is remarkably capable for routine coding tasks and easily fits into 6GB-8GB VRAM pools. Let’s look at how quantization makes this possible.

Understanding Quantization

Quantization is the process of reducing the precision of the model's weights from 16-bit floating point (fp16) to 8-bit, 4-bit, or even fewer bits. GGUF, the file format used by llama.cpp and Ollama, is how these quantized weights are packaged. A 4-bit quantized model takes up roughly a quarter of the fp16 memory while retaining most of its reasoning capability. For coding tasks, where the logic has to be exact, 4-bit (Q4_K_M) is generally the sweet spot of speed vs. intelligence.
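The core idea can be illustrated with a simplified symmetric ("absmax") 4-bit scheme. Each block of weights shares one scale, and every weight becomes a small integer code. This is an assumption-laden sketch, not the real Q4_K_M algorithm, which adds super-blocks and quantized scales. The function names are hypothetical.

```javascript
// Simplified symmetric ("absmax") 4-bit quantization of one block of weights.
// NOT the real Q4_K_M scheme; it only illustrates trading precision for memory.

// Map each weight to an integer code in [-7, 7] using one shared scale per block.
function quantize4bit(weights) {
  const absMax = Math.max(...weights.map(Math.abs));
  const scale = absMax === 0 ? 1 : absMax / 7;
  const q = weights.map((w) => Math.max(-7, Math.min(7, Math.round(w / scale))));
  return { q, scale };
}

// Recover approximate weights: integer code times the block scale.
function dequantize({ q, scale }) {
  return q.map((v) => v * scale);
}

// Storage relative to fp16: 4-bit codes plus one fp16 scale per block.
function storageRatio(blockSize) {
  return (blockSize * 4 + 16) / (blockSize * 16);
}

const weights = [0.12, -0.48, 0.3, 0.05, -0.22, 0.41, -0.09, 0.33];
const block = quantize4bit(weights);
const restored = dequantize(block);

// Round-to-nearest keeps each error within half a quantization step (scale / 2).
const maxError = Math.max(...weights.map((w, i) => Math.abs(w - restored[i])));

console.log(block.q, maxError.toFixed(4), storageRatio(32));
```

With 32-weight blocks, `storageRatio(32)` comes out at 0.28125. That is a quarter of the fp16 size plus the overhead of storing each block's scale.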

The Software Stack: Running with Ollama

The days of dealing with complex Python prerequisite nightmares, manually compiling llama.cpp from source, and fighting CUDA versions are mostly behind us. For the solo developer looking for a frictionless setup, Ollama is the ultimate tool.

Ollama acts as a lightweight daemon that manages downloading, running, and serving local models through a local REST API, which also includes an OpenAI-compatible endpoint under `/v1`.

Step 1: Installation & Model Pulling

First, download Ollama from `ollama.com` for your OS. Once installed, open your terminal, then pull and run the model.

```bash
# For the 7B distillation, perfect for standard local environments
ollama run deepseek-r1:7b

# For those with Apple Silicon (32GB+) or RTX 4090s wanting heavy reasoning
ollama run deepseek-r1:14b
```

When you run this, Ollama downloads the model's GGUF weights and automatically starts an interactive prompt in your terminal. But using it as a terminal chatbot only scratches the surface.
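Before scripting against the daemon, it can help to confirm which models are already on disk. Ollama exposes this over HTTP at `GET /api/tags`, the same information `ollama list` prints. The sketch assumes Node.js 18+ (for the built-in `fetch`) and the default port. The helper names `hasModel` and `listLocalModels` are hypothetical.

```javascript
// Check which models the local Ollama daemon has already downloaded.
// Assumes Node.js 18+ (global fetch) and Ollama on its default port.
const OLLAMA_URL = "http://127.0.0.1:11434";

// Pure helper: does the /api/tags payload include the given model tag?
function hasModel(tagsPayload, modelName) {
  return (tagsPayload.models || []).some((m) => m.name === modelName);
}

// Fetch the list of locally available models from the daemon.
async function listLocalModels() {
  const res = await fetch(`${OLLAMA_URL}/api/tags`);
  if (!res.ok) throw new Error(`Ollama returned HTTP ${res.status}`);
  return res.json();
}
```

Usage, with the daemon running: `listLocalModels().then((tags) => console.log(hasModel(tags, "deepseek-r1:7b")))`.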

Step 2: The Local API

The real power unlocks when we treat our local machine as an API endpoint. By default, Ollama serves on `http://127.0.0.1:11434`.

Here is an example of querying your local DeepSeek model using a simple Node.js script. This is the foundation for building your own automation tools:

```javascript
// queryLocalModel.js
// Requires Node.js 18+, which ships a global fetch (no node-fetch needed).

async function askDeepSeek() {
  const prompt = "Write a highly optimized WordPress WP_Query for retrieving 5 custom post types titled 'portfolio', ordered randomly.";

  const response = await fetch('http://127.0.0.1:11434/api/generate', {
    method: 'POST',
    headers: { 'Content-Type': 'application/json' },
    body: JSON.stringify({
      model: 'deepseek-r1:7b',
      prompt: prompt,
      stream: false // return a single JSON object instead of streamed chunks
    })
  });

  if (!response.ok) {
    throw new Error(`Ollama returned HTTP ${response.status}`);
  }

  const data = await response.json();
  console.log("DeepSeek Response:\n", data.response);
}

askDeepSeek().catch(console.error);
```

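Notice the latency when running this script? There's no round trip to a remote data center. The only network hop is your machine's loopback interface, so nearly all the time you wait is your own hardware generating tokens.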

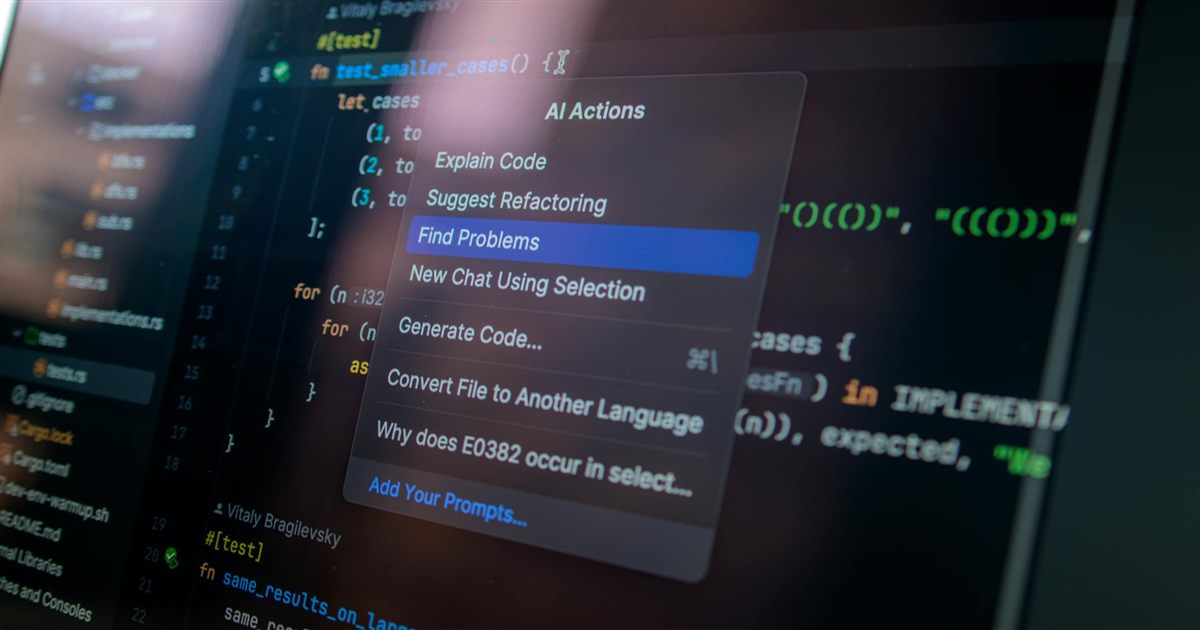
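R1 models write out their chain of thought before answering, typically wrapped in `<think>...</think>` tags. As a result, `data.response` from the script above usually contains both the reasoning and the answer. Here is a minimal sketch for separating the two, assuming a single reasoning block at the start of the output. The `splitReasoning` helper is hypothetical, and the sample string is hand-written rather than real model output.

```javascript
// Separate an R1 reasoning trace from its final answer.
// Assumes at most one <think>...</think> block, as R1 distills typically emit.
function splitReasoning(text) {
  const match = text.match(/<think>([\s\S]*?)<\/think>/);
  if (!match) return { reasoning: "", answer: text.trim() };
  return {
    reasoning: match[1].trim(),
    // Everything after the closing tag is treated as the answer.
    answer: text.slice(match.index + match[0].length).trim(),
  };
}

// Hand-written sample, not real model output.
const sample = "<think>\nThe user wants a random order, so orderby => rand.\n</think>\n\nUse 'orderby' => 'rand'.";
const { reasoning, answer } = splitReasoning(sample);
console.log(answer); // Use 'orderby' => 'rand'.
```

In `askDeepSeek`, logging `splitReasoning(data.response).answer` instead of `data.response` prints only the final answer.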

Practical Application: Replacing Cloud Copilots in Cursor IDE

As independent developers, we build our workflow heavily around IDE integrations. While Cursor defaults to Claude 3.5 Sonnet or GPT-4o, you can route it to your local DeepSeek instance to handle routine questions or code explanations, saving premium API credits for complex architectural overhauls.

1. Open Cursor Settings > Models.

2. Enable OpenAI-Compatible Providers.

3. Add `http://localhost:11434/v1` as the Base URL.

4. Override the model name and enter `deepseek-r1:7b`.

5. Turn off the standard AI models in the UI and test a generation.
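Cursor talks to Ollama through the OpenAI-compatible `/v1` endpoint, not the native `/api/generate` route used earlier. Calling that endpoint directly is a quick way to confirm it responds before debugging the IDE side. This sketch assumes Node.js 18+. Ollama does not check the API key, so `ollama` is just a placeholder for clients that insist on sending one. The helper names are hypothetical.

```javascript
// Call Ollama's OpenAI-compatible chat endpoint, the same /v1 base URL entered in Cursor.
// Assumes Node.js 18+ and the default port. The bearer token is a placeholder Ollama ignores.
const BASE_URL = "http://localhost:11434/v1";

function buildChatRequest(userMessage) {
  return {
    model: "deepseek-r1:7b",
    messages: [{ role: "user", content: userMessage }],
  };
}

// Pull the assistant text out of an OpenAI-style chat completion payload.
function extractReply(payload) {
  return payload.choices?.[0]?.message?.content ?? "";
}

async function chat(userMessage) {
  const res = await fetch(`${BASE_URL}/chat/completions`, {
    method: "POST",
    headers: { "Content-Type": "application/json", Authorization: "Bearer ollama" },
    body: JSON.stringify(buildChatRequest(userMessage)),
  });
  if (!res.ok) throw new Error(`HTTP ${res.status}`);
  return extractReply(await res.json());
}
```

Usage, with the daemon running: `chat("Explain what array_map does in PHP.").then(console.log)`.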

The “Aha!” Moment: R1 models are “reasoning” models. Because standard IDE integrations don’t always parse the `

Best Practices & Gotchas

Through extensive local testing across various client projects at Nassim Studio, here are the hard-learned lessons:

Context Window Limitations: Local models have smaller practical context windows, because the KV cache grows with every token until VRAM taps out. If you paste a 5,000-line minified React bundle, a 7B model running on 8GB of VRAM will likely hallucinate or run out of memory. Ollama also truncates anything beyond its configured context length, which defaults to only a few thousand tokens. Keep context concise. Provide only the relevant functional components.
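One way to stay within budget is to estimate a prompt's size before sending it. If there is headroom, you can then raise Ollama's `num_ctx` option for that request. This sketch leans on the rough heuristic of ~4 characters per token, and real counts depend on the model's tokenizer. The `reserveForAnswer` and `maxCtx` defaults are assumptions to tune for your own VRAM. R1's reasoning traces can run long, so reserving room for the answer matters.

```javascript
// Rough pre-flight check before sending a large prompt to a local model.
// Assumption: ~4 characters per token; the real tokenizer will differ.
const CHARS_PER_TOKEN = 4;

function estimateTokens(text) {
  return Math.ceil(text.length / CHARS_PER_TOKEN);
}

// Returns Ollama request options with num_ctx large enough for the prompt plus
// an answer reserve, or throws if that would exceed maxCtx (a hypothetical
// ceiling you pick based on your VRAM headroom).
function contextOptions(prompt, { reserveForAnswer = 2048, maxCtx = 8192 } = {}) {
  const needed = estimateTokens(prompt) + reserveForAnswer;
  if (needed > maxCtx) {
    throw new Error(`Prompt needs ~${needed} tokens; trim it below ~${maxCtx - reserveForAnswer}.`);
  }
  // Round up to a multiple of 1024 to keep num_ctx values tidy.
  return { num_ctx: Math.ceil(needed / 1024) * 1024 };
}

// A 10,000-character file: ~2,500 tokens + 2,048 reserved -> 5,120.
console.log(contextOptions("x".repeat(10000))); // { num_ctx: 5120 }
```

The returned object goes under `options` in an `/api/generate` or `/api/chat` request body.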

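Temperature Tuning: DeepSeek R1 shines at reasoning out logic, but its sampling settings matter. For strict coding tasks, stay at the low end of DeepSeek's own recommended range for R1 (`0.5` to `0.7`, with `0.6` suggested). Pushing it much lower can send the model into endless repetition, while high temperatures make reasoning models volatile as their logical chains break apart.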
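With the native API, temperature is set per request inside the `options` object of the `/api/generate` body. Below is a minimal sketch using a hypothetical `buildGenerateBody` helper. Pass whichever temperature you settle on. The 0 to 2 bound is only a sanity check chosen for this example, not a limit enforced by Ollama.

```javascript
// Build an /api/generate request body with an explicit sampling temperature.
// Ollama reads sampling parameters from the "options" object.
function buildGenerateBody(prompt, temperature) {
  // Sanity bound chosen for this sketch only.
  if (!(temperature >= 0 && temperature <= 2)) {
    throw new RangeError("temperature should be between 0 and 2");
  }
  return {
    model: "deepseek-r1:7b",
    prompt,
    stream: false,
    options: { temperature },
  };
}
```

The result can be passed to `JSON.stringify` as the `body` of the `fetch` call in `queryLocalModel.js`.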

Model Unloading: By default, Ollama keeps a model loaded in memory for 5 minutes after the last request. If you then switch to a heavy video game or build a large Docker image, you might wonder where your memory went. You can unload a model on demand, or change how long models stay resident with the `OLLAMA_KEEP_ALIVE` environment variable.
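To free memory right away, Ollama's documentation describes sending a request with just the model name and `keep_alive: 0`. This unloads the model immediately. The sketch assumes Node.js 18+ and the default port. `unloadModel` and `unloadRequestBody` are hypothetical helper names.

```javascript
// Ask Ollama to unload a model now instead of waiting for keep_alive to expire.
// Assumes Node.js 18+ (global fetch) and the default port.
function unloadRequestBody(model) {
  return { model, keep_alive: 0 }; // no prompt: this only changes the model's lifetime
}

async function unloadModel(model = "deepseek-r1:7b") {
  const res = await fetch("http://127.0.0.1:11434/api/generate", {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify(unloadRequestBody(model)),
  });
  if (!res.ok) throw new Error(`HTTP ${res.status}`);
  return res.json();
}
```

`OLLAMA_KEEP_ALIVE` sets the default for every request, and a per-request `keep_alive` field like the one above overrides it.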

Conclusion: The Sovereign Developer

Running DeepSeek locally represents a paradigm shift for independent web developers. We are no longer entirely tethered to subscription architectures for day-to-day coding queries. By localizing our intelligence stack, we gain privacy for sensitive client projects, immunity from API downtimes, and a truly independent digital laboratory.
